If you set out to build a new WAF today (which, believe it or not, people are still doing), everyone would have some idea of how it would work -- you'd set up a reverse proxy, and then use signatures of all kinds on the parameters, headers, body, etc., to detect attacks. And then, based on those signatures or analysis, you'd block or log accordingly. Maybe you'd wait to collect a few requests before you did something, so you'd be sure that this is an attack.

However, I think if we polled 100 appsec practitioners in our community, man-in-the-street style, and asked them to describe how RASP works, we'd probably get many different and hilarious answers.

Last year we spoke at Microsoft's BlueHat conference about some new and original research we productized into our existing Contrast Protect Java agent. We call the result of this research Runtime Exploit Prevention. Let's call it REP. The acronym was chosen because it's much like an application-layer version of Data Execution Prevention (DEP), which is wildly successful in providing on-by-default protection against several bug classes. This is a shameless act of subliminal association on our part. We worship Solar Designer here.

We're now porting all of our agents to this new framework after a year of experimentation. So, now even *our* product works differently than *our* "RASP v1" worked. Given all this, we wanted to take some time to help folks understand RASP.

People tend to think of RASP as "WAF, but in the app". No, not really -- not any more than a smoke detector is a fire truck, but in the house. This is the first in a series called "Pulling Back the Curtain on RASP", in which I, against the advice of our well-meaning patent attorneys, will talk openly about how our Protect product works -- centering on how our rules and other features work. I hope that by giving some transparency, we can drive people to experiment for themselves with our freemium called Community Edition and fast-forward the normalization of this space, because the world desperately needs RASP, Contrast or not.

We'll talk about how RASP works, what it's good at, what WAF is good at, and everything in between, until every one of you unsubscribes, buys a license, or uses the freemium. Other rejected titles for the series included, "How to Build a RASP For Yourself And Not Pay Us", and, "7 Things Our IP Lawyers Said We Should Keep to Ourselves".

What I hope to demonstrate through the series is these 2 key principles:

In a WAF, you have one channel through which you can play -- the HTTP communication. In a WAF, you have 2 options for reaction: block the request or fiddle with the response. Obviously, this severely limits the number of moves you can make. In a RASP, you have no such limitations. You can do whatever the programmer can do.

Our first case study in this series explores deserialization attacks. Deserialization is taking a bunch of binary bits and reconstituting an object from them. As we've discussed in previous research, you can repurpose types' special deserialization logic into doing evil stuff.

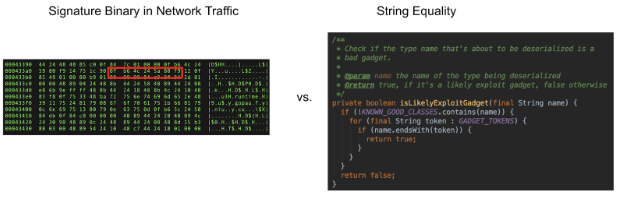

To stop deserialization attacks, a WAF, which is probably written in C, C++ or Rust (well, let's settle on "not the languages people generally write webapps in"), would have to try to signature subsets of the serialized objects' binary to look for known evil gadget types. After all, you can't just block any serialized data, because then the app won't work. It would also have to hope that there's no encoding monkey business in the traffic or deserialization specification that would make the attack invisible to signaturing. History doesn't reflect well on strategies like that.
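To make the encoding problem concrete, here's a minimal sketch of a naive wire-level signature. The `NaiveWireSignature` class and its methods are invented for illustration and aren't taken from any real WAF. `java.util.ArrayList` is not a gadget; it stands in for one only so the example runs without third-party libraries.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectOutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Base64;

// Hypothetical illustration of byte-level signaturing on request data.
final class NaiveWireSignature {

    // Returns true if the class name appears literally in the request bytes.
    // ISO-8859-1 maps every byte to exactly one char, so no bytes are lost.
    static boolean matches(byte[] requestBody, String className) {
        String haystack = new String(requestBody, StandardCharsets.ISO_8859_1);
        return haystack.contains(className);
    }

    // Serializes a value with Java's native serialization.
    static byte[] serialize(Object value) {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(buffer)) {
            out.writeObject(value);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return buffer.toByteArray();
    }

    public static void main(String[] args) {
        String standInGadget = "java.util.ArrayList"; // placeholder, not a real gadget
        byte[] raw = serialize(new ArrayList<String>());
        byte[] encoded = Base64.getEncoder().encode(raw);

        System.out.println(matches(raw, standInGadget));     // true
        System.out.println(matches(encoded, standInGadget)); // false
    }
}
```

The same payload sails past the signature once it's Base64-encoded, which is a perfectly ordinary way for an app to carry serialized objects in a parameter or cookie. A WAF can add a decoder for that case, but it has to guess every such transformation the app might apply.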

I can’t speak for others, but I can tell you how we do it. I’ll talk about Java since that’s what I know. We employ two strategies: blocking known evil gadgets, and sandboxing the deserialization process from known exploit behavior.

The first thing we do, using binary instrumentation, is add callbacks in Java’s native deserialization and in other serialization libraries, including Jackson, Gson, and XStream. Specifically, we add them in the code that processes the bits and creates new objects from them.

It’s easy to see why looking for gadgets is more accurate at the point when the deserializer code is running. All of the network, encoding, endianness, buffering, storage, and the rest of the data lifecycle of the attack payload is totally irrelevant -- we can block it when the exploit is about to occur.
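Here's a rough sketch of what checking types at the point of deserialization can look like. To be clear, this is not the agent's instrumentation. It swaps in the standard `ObjectInputStream.resolveClass` override (often called look-ahead deserialization) as a stand-in, because it runs at the same moment: when the deserializer is about to turn a class name into a type. The `GadgetCheckingInputStream` class and the denylist contents are assumptions made for the example.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.InvalidClassException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.ObjectStreamClass;
import java.io.UncheckedIOException;
import java.util.Set;

// Hypothetical stream that checks every class name as the deserializer resolves it.
class GadgetCheckingInputStream extends ObjectInputStream {

    // Fully qualified names of types we refuse to deserialize (placeholder values).
    private final Set<String> deniedTypes;

    GadgetCheckingInputStream(InputStream in, Set<String> deniedTypes) throws IOException {
        super(in);
        this.deniedTypes = deniedTypes;
    }

    // Called by the JDK for each class descriptor in the stream, before any
    // instance of that class is created.
    @Override
    protected Class<?> resolveClass(ObjectStreamClass desc)
            throws IOException, ClassNotFoundException {
        if (deniedTypes.contains(desc.getName())) {
            throw new InvalidClassException(desc.getName(), "blocked by gadget denylist");
        }
        return super.resolveClass(desc);
    }

    // Reads one object from the given bytes, applying the denylist.
    static Object deserialize(byte[] bytes, Set<String> deniedTypes)
            throws IOException, ClassNotFoundException {
        try (ObjectInputStream in =
                 new GadgetCheckingInputStream(new ByteArrayInputStream(bytes), deniedTypes)) {
            return in.readObject();
        }
    }

    // Serializes a value with Java's native serialization.
    static byte[] serialize(Object value) {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(buffer)) {
            out.writeObject(value);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return buffer.toByteArray();
    }
}
```

By the time `resolveClass` runs, any transport encoding has already been undone by the application itself, so the check compares exact type names rather than byte patterns. It's still a list of known-bad names, though, with the limits that implies.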

Although this is clearly way better signaturing because it’s signaturing with strong types, at the point of exploitation, it’s still signaturing. We’re still vulnerable to 0days -- attacks that use gadgets previously unknown to the community. Let’s look at our handy REP framework to see if there’s another opportunity to secure deserialization:

| Input Classification | Volumetric Analysis | Input Tracing | Semantic Analysis | Hardening | Sandboxing |
| --- | --- | --- | --- | --- | --- |
| Identify clear attacks and prevent processing. | Identify patterns of activity that represent an attack. | Identify when external input introduces code that will run in an interpreter. | Detect self-harming behavior through language analysis. | Enable, improve, and enhance existing security controls. | Prevent access to powerful functions from risky subsystems. |

Sandboxing! Using instrumentation we can sandbox the deserialization process in some interesting ways that can provide really strong security guarantees.

By adding sensors to different subsystems, we can enforce code separation. For instance, if we want to say “deserialization code should never lead to a new system command”, we can use a ThreadLocal (a variable that has a value for each individual thread) and three sensors to do it.

Let’s imagine we put Sensor A in the beginning of a deserialization. Sensor A marks a ThreadLocal denoting we are in a deserialization process.

Then, we put Sensor B in the code that occurs at the end of deserialization. Sensor B just clears out the ThreadLocal, denoting that we are not in a deserialization process.

Finally we put Sensor C in the code for starting a new system process. Sensor C checks to see if the ThreadLocal was set by Sensor A -- if so, it means we are in a deserialization process, and this is definitely an attack.

    server.handleRequest()
    ...
    com.acme.ProcessUserEntry.handle(ProcessUserEntry.java:135)
    ...
    java.io.ObjectInputStream.readObject(ObjectInputStream.java:250)   <- method we want to sandbox
    ...
    java.lang.Runtime.exec(Runtime.java:142)   <- method we block access to with Sensor C
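The three sensors can be sketched in plain Java roughly like this. In the real thing the sensor calls would be woven into `readObject()` and `Runtime.exec()` by instrumentation; here, `deserialize` and `exec` are hypothetical stand-ins for those instrumented methods, and every name in `DeserializationSandbox` is made up for the example.

```java
import java.util.function.Supplier;

// Hypothetical sketch of the three-sensor sandbox; not the agent's actual code.
final class DeserializationSandbox {

    // Per-thread flag: true while the current thread is inside deserialization.
    private static final ThreadLocal<Boolean> IN_DESERIALIZATION =
            ThreadLocal.withInitial(() -> Boolean.FALSE);

    // Sensor A: runs at the start of deserialization.
    static void onDeserializationStart() {
        IN_DESERIALIZATION.set(Boolean.TRUE);
    }

    // Sensor B: runs at the end of deserialization, even if it failed.
    static void onDeserializationEnd() {
        IN_DESERIALIZATION.remove();
    }

    // Sensor C: runs just before a new system process would be started.
    static void onProcessStart(String command) {
        if (IN_DESERIALIZATION.get()) {
            throw new SecurityException(
                    "Blocked process start during deserialization: " + command);
        }
    }

    // Stand-in for an instrumented readObject(): Sensor A, the body, then Sensor B.
    static <T> T deserialize(Supplier<T> body) {
        onDeserializationStart();
        try {
            return body.get();
        } finally {
            onDeserializationEnd();
        }
    }

    // Stand-in for an instrumented Runtime.exec(): Sensor C, then the "real" work.
    // It only returns a string, so nothing is actually executed.
    static String exec(String command) {
        onProcessStart(command);
        return "started: " + command;
    }

    public static void main(String[] args) {
        System.out.println(exec("ls")); // outside deserialization: allowed
        try {
            deserialize(() -> exec("calc")); // inside deserialization: blocked
        } catch (SecurityException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

Putting Sensor B in a `finally` block matters: if deserialization throws, the flag still gets cleared and the thread isn't left permanently marked. A single boolean also assumes deserialization calls don't nest on one thread; a depth counter would be the obvious refinement if they do.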

With 3 sensors adding just a few CPU cycles, we’ve created an incredibly hard-to-beat sandbox for deserialization. The new deserialization controls presented in JEP 290 are aimed at a slightly different problem than we are -- that feature attempts to restrict the resources deserialization can consume, or the types it can generate. This, on the other hand, is a novel protection that assumes those protections can be bypassed, and still protects you from exploitation. Running java.lang.Runtime#exec() isn’t the only attack vector, but hopefully you get the idea here -- instrumenting across components can provide huge security benefits.
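For contrast, this is roughly what the JEP 290 side of the comparison looks like in code, using the JDK's `ObjectInputFilter` API (Java 9 and later). The `Jep290FilterDemo` class and the specific pattern are assumptions chosen for the example, not a recommended production filter.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputFilter;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.UncheckedIOException;

// Hypothetical demo of a JEP 290 serialization filter.
final class Jep290FilterDemo {

    // Limit graph depth and array size, allow classes in java.lang, reject the rest.
    static final String PATTERN = "maxdepth=5;maxarray=1000;java.lang.*;!*";

    // Reads one object from the given bytes with the filter applied.
    static Object readFiltered(byte[] bytes) throws IOException, ClassNotFoundException {
        ObjectInputFilter filter = ObjectInputFilter.Config.createFilter(PATTERN);
        try (ObjectInputStream in = new ObjectInputStream(new ByteArrayInputStream(bytes))) {
            in.setObjectInputFilter(filter); // must be set before the first read
            return in.readObject();
        }
    }

    // Serializes a value with Java's native serialization.
    static byte[] serialize(Object value) {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(buffer)) {
            out.writeObject(value);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return buffer.toByteArray();
    }
}
```

With this pattern, an `Integer` deserializes normally, while a `java.util.ArrayList` is rejected with an `InvalidClassException`. The filter decides which types and how much data get in; it has nothing to say about what an allowed type does once it's being reconstituted, which is the gap the sandbox is aimed at.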

Hopefully I’ve presented a challenge to your position on RASP today. What’s more scalable: us continuing our research, improving every day, and providing the best possible attack protection to you as a service, or teaching your developers the ins and outs of deserialization attacks, protections, etc., forever, and hoping they get it right?

I hope you enjoyed this tour, and hopefully I made the case that RASP provides superior protection to WAF in terms of deserialization.

Stay tuned here as we go through more vulnerability classes!

P.S. Because these protections occur at the JVM level, and not with any higher level language constructs, they install and work the same way for all languages that run on the JVM, like Java, JRuby, Jython, Grails, ColdFusion, Scala, Groovy, Kotlin, etc.
